Part One: The Mistake That Changed Everything

Emma Chen never thought of herself as a data source. She was just tired.

It was 3:47 AM on a Tuesday. Her newborn had finally stopped crying after two straight hours of wailing. Her eyes burned like someone had poured sand into them. Her shoulders ached from bouncing the baby up and down in the dark hallway. She had tried meditation apps. She had tried white noise machines. She had tried that expensive magnesium spray her sister swore would change her life. Nothing worked.

Then she saw the ad on her phone. It was a video, crisp and clean, with soft piano music in the background. A woman who looked like she had never been tired in her life was smiling while putting on a sleek gray headband.

The text on the screen read: “NeuroBand – The first wearable that reads your brain’s tired signals. Fall asleep 2x faster. Wake up sharp.”

The video showed the headband from every angle. Three small sensors pressed gently against the forehead and behind the ears. No wires. No sticky gels. No doctor visits. Just pure, easy brain tracking.

The narrator had a calm, trustworthy voice. “It’s like a Fitbit for your mind,” she said. “We analyze your theta and delta waves to find your perfect sleep window. You don’t have to guess anymore. Your brain will tell you exactly what it needs.”

Emma clicked Buy Now. $199. Free shipping. She didn’t read the reviews. She didn’t compare other brands. She just wanted to sleep.

The package arrived two days later in a beautiful white box with gold lettering. Inside was the headband, a magnetic charging cable, and a small card that said: “Welcome to the future of rest. Download the app to begin.”

That night, Emma put on the headband. It felt strange at first – like wearing a soft helmet for a tiny brain. But after a few minutes, she forgot it was there. She fell asleep within fifteen minutes. That hadn’t happened in months.

The next morning, she opened the NeuroBand app. A beautiful color-coded chart filled her phone screen. Deep sleep: 2 hours and 4 minutes. REM sleep: 1 hour and 47 minutes. Focus score for the day: 73 percent – Good! There was even a personalized tip: “Try sleeping one hour earlier tonight, Emma. Your brain shows early signs of fatigue starting at 9 PM.”

She felt seen. For the first time in months, she felt like something understood what was happening inside her head. She started sharing her sleep scores with her mom group on WhatsApp. Three of her friends bought NeuroBands the same week.

For three weeks, it was magic. Emma’s mood improved. Her patience with the baby got better. She even had enough energy to cook dinner two nights in a row – a small victory, but a real one.

She never thought twice about the privacy policy. Nobody does. Privacy policies are written in tiny gray text that lawyers designed to be unreadable. Emma had clicked “Accept” without scrolling past the first paragraph. She was tired. She just wanted to see her sleep chart.

But six months later, Emma got an email that made her blood run cold. It wasn’t from NeuroBand. It was from her health insurance provider – a big company with a cheerful blue-and-white logo that usually sent her flyers about free flu shots.

The subject line read: “Congratulations, Emma! You’ve been pre-approved for a Wellness Rewards plan.”

She opened it. The message was friendly, almost bubbly:

“Dear Emma, our new AI health system has detected that your neural recovery patterns suggest a higher likelihood of future anxiety disorders. We want to help you stay ahead of your health! You’ve been pre-approved for our premium mental wellness plan – only $89 per month (regularly $210). This plan covers virtual therapy sessions, medication management, and 24/7 crisis support. Click here to accept. If you do not want coverage adjustments, click here to opt out.”

Emma read the email three times. Her hands started shaking.

She had never told her insurance company about her sleep troubles. She had never mentioned that her mother had panic attacks. She had never given anyone permission to share her brain data.

But her brain had.

She called the insurance company. After forty-five minutes on hold, she finally reached a representative named Marcus who spoke in a calm, rehearsed voice.

“Ma’am, we purchased anonymized neural insights from a third-party data aggregator,” Marcus said. “Your NeuroBand data was part of a research pool. Our algorithms identified patterns consistent with prodromal anxiety. That means early signs before symptoms appear.”

“But I don’t have anxiety,” Emma said.

“The algorithm disagrees,” Marcus replied. “Would you like to enroll in the wellness plan?”

Emma hung up. She sat in her kitchen, staring at the gray headband on the counter. It looked so innocent. So helpful. So harmless.

That was the moment she decided to fight back. She didn’t know how. She didn’t know the laws. But she knew one thing for certain: her brain was hers, and she had never sold it.

Part Two: Meet Carlos – The College Student Who Lost a Job Offer

While Emma was still trying to understand what happened, a twenty-two-year-old college senior named Carlos was about to get his own rude awakening.

Carlos was a straight-A student majoring in computer engineering. He had attention deficit hyperactivity disorder – ADHD – and he had learned to manage it without medication. He used a popular focus band called AuraMind that cost $179. The band had a small vibrating motor inside that buzzed against his forehead whenever his attention drifted.

He wore it during study sessions, during lectures, and even during exams. The AuraMind app gave him a “focus score” every hour. He loved competing against himself to beat his personal best.

In his senior year, Carlos applied for a dream job at a large tech company. The job paid $95,000 to start. It came with free lunches, a gym membership, and stock options. He made it through three rounds of interviews. The recruiters loved him. His future boss said he was “the most prepared candidate we’ve seen all year.”

Then came the final step: a cognitive assessment. The company used a third-party service called MindScreen. Carlos had to wear his AuraMind band during a two-hour online test. The test measured how quickly he responded to visual puzzles, how long he could maintain focus, and how his brain waves changed when he got frustrated.

Carlos did well on the puzzles. He answered ninety-two percent correctly. But the MindScreen report – which he was never allowed to see – told a different story.

Weeks later, the recruiter called. “Carlos, we’ve decided to move forward with other candidates.”

“Why?” he asked. “I thought the interviews went great.”

There was a long pause. Then the recruiter said, “I shouldn’t tell you this, but your cognitive assessment showed elevated neural variability associated with impulse control. It’s not a medical diagnosis. It’s just… our algorithm prefers more stable patterns.”

Carlos hung up. He opened his AuraMind app. He went to the settings menu. He clicked on “Privacy” and then “Data Sharing.” It was already turned on. The setting said: “Allow anonymous sharing for research and commercial partnerships.”

He turned it off. But it was too late. His neural data had already been sold, analyzed, and used against him.

He never got that job. Two months later, he saw the position reposted online. Someone else was sitting at that desk. Someone with “more stable” brain waves.

Carlos started a spreadsheet that night. He listed every focus band and sleep band he could find. He went through each privacy policy line by line. He highlighted the sentences that allowed data selling. By the time he finished, he had forty-seven pages of notes.

He posted the spreadsheet on a forum for students with ADHD. Within a week, five hundred people had added their own findings. They called it the Neural Data Watchlist.

Part Three: What Are Neural Patterns – And Why Do Companies Want Them?

Let’s back up. Because if you don’t understand what a neural pattern is, you won’t understand why this is a bigger deal than a stolen credit card number or a hacked social media account.

Your Brain Leaves a Fingerprint Every Second of Every Day

Imagine your brain is a giant city. A city with 86 billion residents – those residents are your neurons. During the day, the city has busy highways with cars zooming everywhere. That’s your beta waves – focus, alertness, problem-solving. At night, the city has quiet residential streets where almost no one drives. That’s your delta waves – deep, dreamless sleep. And sometimes, right before you fall asleep or right as you wake up, there are strange traffic jams where cars move slowly and randomly. That’s your theta waves – drowsiness, daydreaming, creativity.

A wearable sleep or focus band uses tiny metal sensors to listen to the electrical conversations between your neurons. Each conversation is incredibly faint – we’re talking about one millionth of a volt. But the sensors are sensitive enough to pick up those whispers.

Here’s what the band can hear:

How quickly you get tired. Your brain waves slow down in a very specific pattern when you’ve been awake for too long. The band can detect that pattern up to forty-five minutes before you actually feel sleepy.

How deep your sleep actually is. You might think you slept for eight hours, but your brain might have only gotten ninety minutes of deep sleep. The band knows the difference.

Whether you have micro-awakenings. These are moments when you wake up for two or three seconds and then fall back asleep. You never remember them. But the band counts every single one.

Your baseline attention span. The band can measure how long you can focus on a task before your mind starts to wander. It knows your personal limit within a few seconds of accuracy.

Early signs of brain fog or stress. Before you feel stressed, your brain waves change. The band can see those changes hours before you notice anything wrong.

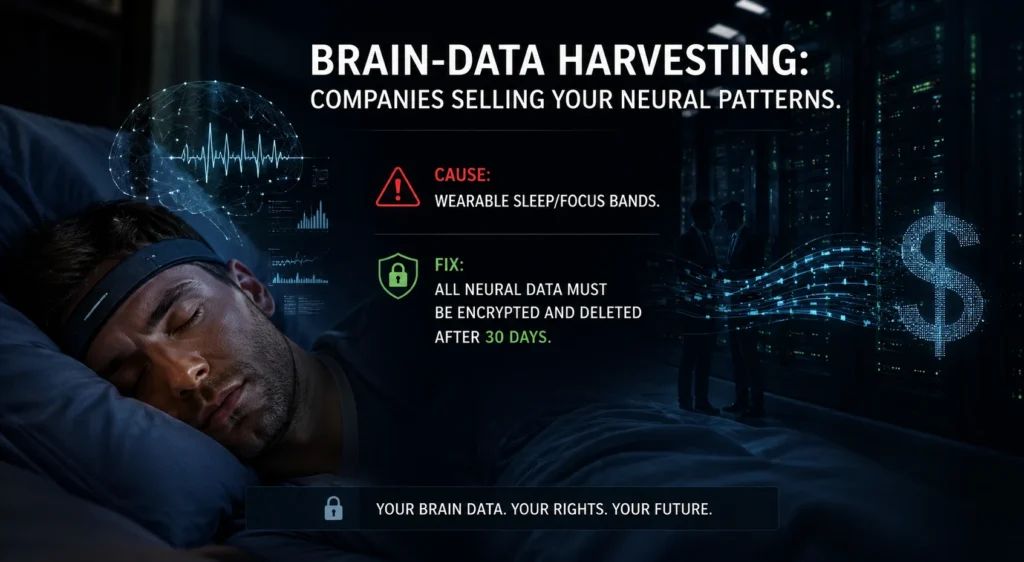

Now, here’s the part the ads don’t tell you. That data is incredibly valuable – not to you, but to everyone else. Companies don’t sell headbands because they love helping people sleep. They sell headbands because brain data is the new oil. It’s the new gold. It’s the new credit score that you never agreed to have.

The Three Buyers You Never Met

Let me introduce you to the people who are buying your brain waves right now, while you read this sentence.

First Buyer: Insurance Companies

Insurance companies exist to make money. They make money by collecting premiums and paying out as few claims as possible. If an insurance company knows that your brain patterns look like someone who will develop depression, anxiety, or even Alzheimer’s disease in the next five years, they have two choices. They can raise your rates now. Or they can deny you coverage completely.

They don’t need a doctor’s diagnosis. They don’t need a medical record. They just need a prediction from an algorithm. And predictions from brain data are getting more accurate every year.

In 2023, a research team in Switzerland published a study showing that sleep band data could predict major depressive episodes with eighty-five percent accuracy. That’s higher than most doctors can achieve with a face-to-face interview. The insurance industry noticed immediately.

Second Buyer: Employers

Some companies already offer “wellness stipends” for focus bands. They’ll give you two hundred dollars to buy a band, and in return, you agree to share your “aggregated team data.” Aggregated sounds safe, right? It means they combine everyone’s data so no single person can be identified.

But aggregated data is not as anonymous as companies claim. With enough data points – and these bands generate millions of data points per person per week – you can start to identify individuals. Your brain waves are as unique as your fingerprint. A study from 2020 showed that researchers could re-identify people from their brain data with ninety-two percent accuracy using just five minutes of recording.

Your employer might not care about your individual sleep score today. But what about tomorrow? What about the day they need to decide who gets promoted and who gets laid off?

Third Buyer: Advertisers

This is the scariest one. A startup in California has already patented a method to serve you ads based on your “cognitive vulnerability window.” That’s a fancy way of saying: they wait until your brain shows that you’re tired, stressed, or unfocused. Then they show you an impulse-buy ad for something you don’t need.

Think about the last time you shopped online at midnight. You probably bought things you wouldn’t have bought at noon. That’s because your brain’s decision-making centers get tired just like your muscles do. Advertisers know this. They’ve always known this. But before brain bands, they had to guess when you were tired. Now they don’t have to guess. They can know.

There’s a company called AdNeuro that sells “neurally-targeted ad slots.” They guarantee that your ad will be shown only to people whose brain waves show low resistance and high receptivity. In plain English: they show your ad to people who are too tired to say no.

Emma’s insurance email wasn’t a glitch. Carlos’s lost job offer wasn’t a mistake. These are the first warning signs of a system that is already running, already selling, already profiling – and almost completely invisible.

Part Four: The Secret Life of Your Sleep Data – A Step-by-Step Journey

Let me walk you through exactly what happens from the moment you put on a sleep band to the moment your brain waves end up in a data broker’s database. I’m going to use a made-up brand name here – let’s call it ZennBand – because this is how dozens of bands work right now, not just one.

Step One: You Put On the Band

It’s 10:30 PM. You’ve had a long day. You charge the band for thirty minutes, just like the instructions say. Then you strap it around your head. The sensors press against your forehead – not hard, just enough to make good contact. The band glows blue for a second, then fades to nothing. You close your eyes.

Step Two: The Band Starts Recording

Inside the band is a small computer chip called an EEG sensor. EEG stands for electroencephalogram – that’s just a fancy word for “brain wave reader.” This chip samples your electrical brain activity 250 times per second. Every single second. All night long.

Do the math. 250 samples per second times 60 seconds equals 15,000 samples per minute. Times 60 minutes equals 900,000 samples per hour. A typical night of eight hours gives you 7.2 million samples. Add in the two hours before you fall asleep and the one hour after you wake up, and you’re looking at nearly 10 million data points every single night.

That’s not an exaggeration. That’s the actual number.
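If you want to check that multiplication yourself, here is a small sketch. It assumes the count is for a single sensor channel; a band with three sensors would produce three times as many numbers. The function name samples_for is made up for this example.

```python
SAMPLE_RATE_HZ = 250          # samples per second, as stated above
SECONDS_PER_HOUR = 60 * 60


def samples_for(hours: float, sample_rate_hz: int = SAMPLE_RATE_HZ) -> int:
    """Samples one sensor channel produces over the given number of hours."""
    return int(hours * SECONDS_PER_HOUR * sample_rate_hz)


per_minute = SAMPLE_RATE_HZ * 60       # 15,000
per_hour = samples_for(1)              # 900,000
asleep = samples_for(8)                # 7,200,000
whole_session = samples_for(8 + 2 + 1) # 9,900,000, "nearly 10 million"

print(per_minute, per_hour, asleep, whole_session)
```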

Step Three: The App Cleans the Signal

Your brain waves are not the only electrical signals your body produces. Your eyes blink. Your jaw muscles tense. Your heart beats. Your phone, sitting on the nightstand, sends out tiny electromagnetic fields. All of these things create “noise” – signals that are not brain waves but look similar enough to confuse the sensor.

The ZennBand app has a filter that tries to remove this noise. It’s like a voice recognition program that can pick out one person’s voice in a crowded room. The filter is not perfect. It never will be. But it’s good enough to leave behind a “clean” neural signal – a file that represents, as closely as possible, the actual electrical activity of your brain.
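How ZennBand's filter works is not something this story specifies. As a rough illustration only, here is one of the simplest cleaning tricks used with brain recordings: cut the signal into one-second chunks and throw away any chunk whose swing is too large to be brain activity, since blinks and jaw clenches are far bigger than brain waves. The 100 microvolt limit and all the names below are assumptions for this sketch. Real pipelines add frequency filtering and much more.

```python
import math
from typing import List

EPOCH_SAMPLES = 250            # one second at 250 samples per second
MAX_PEAK_TO_PEAK_UV = 100.0    # hypothetical rejection limit, in microvolts


def split_epochs(signal_uv: List[float], size: int = EPOCH_SAMPLES) -> List[List[float]]:
    """Cut the recording into consecutive fixed-length chunks (a short tail is dropped)."""
    return [signal_uv[i:i + size] for i in range(0, len(signal_uv) - size + 1, size)]


def is_clean(epoch: List[float], limit_uv: float = MAX_PEAK_TO_PEAK_UV) -> bool:
    """A chunk counts as clean if its highest and lowest values are close enough together."""
    return max(epoch) - min(epoch) <= limit_uv


def clean_epochs(signal_uv: List[float]) -> List[List[float]]:
    """Keep only the chunks that pass the amplitude check."""
    return [epoch for epoch in split_epochs(signal_uv) if is_clean(epoch)]


# Synthetic example: one quiet second, then one second containing a blink-sized spike.
quiet = [20.0 * math.sin(2 * math.pi * 10 * t / EPOCH_SAMPLES) for t in range(EPOCH_SAMPLES)]
blink = list(quiet)
blink[100] = 300.0
kept = clean_epochs(quiet + blink)
print(len(kept), "of 2 chunks kept")
```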

Step Four: The App Generates Your Sleep Score

This is the part you see. The app takes your clean neural signal and runs it through a second algorithm that converts raw brain waves into human-friendly numbers. Deep sleep minutes. REM minutes. Time to fall asleep. Number of awakenings. Focus score for the next day.
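The scoring algorithm itself is a trade secret, so what follows is only a sketch of a common first step: measuring how much of the signal's energy falls into each brain-wave band. The band edges below are conventional textbook ranges, not ZennBand's, and the function names are invented for this example. A real sleep-staging algorithm does far more than pick the strongest band.

```python
import cmath
import math
from typing import Dict, List

SAMPLE_RATE_HZ = 250
# Conventional approximate band edges in hertz (an assumption, not a vendor spec).
BANDS = {"delta": (0.5, 4.0), "theta": (4.0, 8.0), "alpha": (8.0, 13.0), "beta": (13.0, 30.0)}


def band_powers(samples: List[float], sample_rate: int = SAMPLE_RATE_HZ) -> Dict[str, float]:
    """Sum the power of each frequency bin into its band, using a plain discrete Fourier transform."""
    n = len(samples)
    powers = {name: 0.0 for name in BANDS}
    for k in range(1, n // 2):
        freq = k * sample_rate / n
        band = next((name for name, (lo, hi) in BANDS.items() if lo <= freq < hi), None)
        if band is None:
            continue  # frequency falls outside every band we track
        coeff = sum(x * cmath.exp(-2j * math.pi * k * t / n) for t, x in enumerate(samples))
        powers[band] += abs(coeff) ** 2
    return powers


def dominant_band(samples: List[float]) -> str:
    """Name of the band holding the most power."""
    powers = band_powers(samples)
    return max(powers, key=powers.get)


def make_sine(freq_hz: float) -> List[float]:
    """One second of a pure tone, standing in for a brain rhythm."""
    return [math.sin(2 * math.pi * freq_hz * t / SAMPLE_RATE_HZ) for t in range(SAMPLE_RATE_HZ)]


print(dominant_band(make_sine(2)))   # slow rhythm, like deep sleep
print(dominant_band(make_sine(20)))  # fast rhythm, like focused attention
```

Count how many one-second windows in a night come out as "delta" and you have a crude stand-in for "deep sleep minutes."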

This is also the part where the app adds value. You feel good when you see a high sleep score. You feel motivated to wear the band again tomorrow. The company wants you to feel that way. Happy customers keep wearing the band. Wearing the band means more data.

Step Five: The Fine Print Activates

Here’s where things get sneaky. When you first installed the ZennBand app, you were shown a screen that said “Terms of Service and Privacy Policy.” There was a blue button at the bottom that said “Accept.” You clicked it. Everyone clicks it.

On page fourteen of the terms of service – a page you never saw – there is a paragraph that reads something like this:

“By using ZennBand, you grant us a perpetual, royalty-free, worldwide license to use, store, analyze, and share de-identified neural data for the purposes of product improvement, research, and commercial partnerships. De-identified data does not include personally identifiable information.”

“De-identified” sounds safe. It sounds like they removed your name, your email, your address. And they did. But here’s the secret that the privacy policy does not explain: de-identified data can be re-identified.

Step Six: The Data Leaves Your Phone

Every night while you sleep, the ZennBand app uploads your cleaned neural signal to the company’s cloud servers. It happens automatically. You don’t have to press anything. You don’t get a notification. By the time you wake up, your brain waves are already on a computer that ZennBand rents from Amazon or Google or Microsoft.

Those cloud servers are located somewhere. Maybe Virginia. Maybe Ireland. Maybe Singapore. You don’t know. You’re not told. The data is stored in a giant database alongside the brain waves of millions of other customers.

Step Seven: The Data Is Sold

ZennBand does not sell headbands as their main business. They sell headbands to get data. The data is the real product.

ZennBand has a data broker partner called NeuroLedger. Every week, ZennBand sends NeuroLedger a file containing the de-identified neural data of everyone who wore a band that week. NeuroLedger pays ZennBand for this file. The price is not public, but former employees have said it’s around $0.50 per person per night. That doesn’t sound like much. But multiply it by one million customers wearing the band every night, and you get $500,000 per night. $3.5 million per week. More than $180 million per year. Just from selling data. The headband sales are extra.
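Taking the two figures in that paragraph at face value (fifty cents per person per night, one million people wearing the band every night), the multiplication works out like this. The variable names are made up for the example.

```python
PRICE_PER_PERSON_NIGHT = 0.50   # dollars, the figure quoted above
NIGHTLY_WEARERS = 1_000_000     # customers wearing the band each night

per_night = PRICE_PER_PERSON_NIGHT * NIGHTLY_WEARERS
per_week = per_night * 7
per_year = per_night * 365

print(f"${per_night:,.0f} per night")  # $500,000 per night
print(f"${per_week:,.0f} per week")    # $3,500,000 per week
print(f"${per_year:,.0f} per year")    # $182,500,000 per year
```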

Step Eight: NeuroLedger Enriches the Data

Here’s where de-identification really breaks. NeuroLedger does not just buy data from ZennBand. They buy data from hundreds of sources. Shopping data from loyalty cards. Location data from phone apps. Social media posts from public profiles. Credit card transactions from data aggregators. Web browsing history from browser extensions.

NeuroLedger has a supercomputer that combines all of these data sources. They look for patterns. A person with certain brain waves also shops at certain stores. A person with certain sleep patterns also posts on social media at certain times. A person with certain focus scores also visits certain websites.

Within hours, the “de-identified” neural data is linked back to real people. NeuroLedger does not need your name. They have a unique identifier – a random string of letters and numbers – that represents you. They know your shopping habits. They know your location history. They know your social media activity. They know your credit score. They know your brain waves.

They just don’t know your name. And they don’t need it.
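Here is a toy version of that linking step. Everything in it is invented for illustration: the field names, the profile labels, the ten-minute tolerance. It assumes the broker holds two datasets that share a zip code and a rough bedtime, and it shows how two "anonymous" records snap together without a name ever appearing. Real brokers would use many more attributes than two.

```python
from typing import Optional

# Hypothetical "de-identified" neural records: no name, only a random ID.
neural_records = [
    {"id": "a91f", "zip": "22030", "usual_bedtime": "23:40", "risk_score": 0.81},
    {"id": "c07d", "zip": "22030", "usual_bedtime": "21:15", "risk_score": 0.22},
]

# Hypothetical profiles bought from other sources (shopping, location, app usage).
broker_profiles = [
    {"ad_profile": "shopper-1138", "zip": "22030", "last_phone_use": "23:35"},
    {"ad_profile": "shopper-2207", "zip": "22030", "last_phone_use": "21:10"},
    {"ad_profile": "shopper-3319", "zip": "90210", "last_phone_use": "23:38"},
]


def to_minutes(hhmm: str) -> int:
    """Convert 'HH:MM' to minutes after midnight (wrap-around at midnight is ignored here)."""
    hours, mins = hhmm.split(":")
    return int(hours) * 60 + int(mins)


def link(neural: dict, profiles: list, tolerance_min: int = 10) -> Optional[str]:
    """Return the one profile that shares a zip code and a near-identical bedtime, if unique."""
    matches = [
        p["ad_profile"]
        for p in profiles
        if p["zip"] == neural["zip"]
        and abs(to_minutes(p["last_phone_use"]) - to_minutes(neural["usual_bedtime"])) <= tolerance_min
    ]
    return matches[0] if len(matches) == 1 else None


linked = {record["id"]: link(record, broker_profiles) for record in neural_records}
print(linked)
```

Two ordinary facts were enough to attach each brain record to a shopping profile. Add a few hundred more facts and the match stops being a guess.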

Step Nine: The Auction

NeuroLedger creates profiles. Each profile contains hundreds of data points about a single person. One of those data points is a “neural risk score” – a number that predicts the likelihood of developing certain mental or neurological conditions.

These profiles are sold at auction. Insurance companies bid against each other. Employers bid against each other. Advertising networks bid against each other. The highest bidder gets access to the profile.

The auction happens in real time. While you are reading this sentence, somewhere in a data center in northern Virginia, your profile is being sold. The winning bidder might be an insurance company testing a new pricing model. It might be a hedge fund betting on healthcare stocks. It might be a political campaign looking for voters who are tired and easily influenced.

Step Ten: The Decision

The winning bidder uses your profile to make a decision about you. If it’s an insurance company, they might raise your rates. If it’s an employer, they might reject your job application. If it’s an advertiser, they might show you a different ad.

You never know. You are never told. You have no way to appeal. You cannot ask to see the data. You cannot correct errors. You cannot delete your profile.

And none of this is illegal.

Part Five: Meet Dr. Anjali – The Neuroscientist Who Blew the Whistle

Not everyone who works in the brain-data industry is comfortable with what they’re building. Meet Dr. Anjali Krishnamurthy. She has a PhD in cognitive neuroscience from a top university. She spent five years as a senior data scientist at a company called NeuroHarvest – yes, that was the real name.

Anjali loved her job at first. She was building algorithms that could predict seizures before they happened. That’s good science. That saves lives. She was proud of her work.

But after two years, NeuroHarvest started selling its algorithms to different kinds of customers. Insurance companies wanted seizure prediction for underwriting. Employers wanted attention-span prediction for hiring. Advertisers wanted vulnerability prediction for targeting.

Anjali went to her boss. She said, “These customers are using our science to hurt people.”

Her boss said, “We’re just providing tools. What people do with tools is their responsibility.”

Anjali disagreed. She quit six months later. Before she left, she copied tens of thousands of internal emails and documents onto an encrypted USB drive. She hid the drive in a safety deposit box. Then she waited.

In 2024, she leaked the documents to an investigative journalist named Mateo Flores. The documents showed that NeuroHarvest knew their “de-identification” was easily reversible. They knew their “anonymized” data could be linked back to real people. They just didn’t care. One internal email from a product manager read:

“We tell customers the data is anonymous because that’s what they want to hear. The truth is, no EEG data is truly anonymous. But if we told them that, they wouldn’t buy the bands. So we don’t tell them.”

Another email, from a sales director to an insurance company executive, read:

“Our neural risk scores are not clinically validated. We cannot guarantee they predict any real medical condition. However, they are statistically correlated with future claims. If you want to raise premiums on high-risk individuals, our scores will give you plausible deniability.”

Mateo Flores published the story on the front page of a major tech news site. The headline read: “Your Brain Waves Are Not Anonymous: Internal Emails Reveal Data Broker’s Secrets.”

Within twenty-four hours, NeuroHarvest’s stock price dropped thirty percent. Within a week, three class-action lawsuits were filed. Within a month, the company announced it was shutting down its consumer data division.

But NeuroHarvest was just one company. A hundred others kept operating. Anjali’s leak was like pulling one weed from a field that stretched to the horizon.

She testified before Congress six months later. She sat at a long wooden table, facing a panel of senators who looked confused and concerned in equal measure.

“You have to understand,” she said, “we are not talking about science fiction. We are not talking about mind reading. We are talking about something more dangerous. We are talking about predictions. Predictions that feel like facts. Predictions that follow people for years. Predictions that cannot be challenged because the algorithms are secret and the data is invisible.”

Senator Markey from Massachusetts asked her, “Dr. Krishnamurthy, if you could wave a magic wand and change one thing about the brain-data industry, what would it be?”

Anjali didn’t hesitate. “Delete the data after thirty days. That’s it. If companies cannot keep brain data for longer than a month, the entire prediction industry collapses. No long-term risk profiles. No permanent neural fingerprints. No lifelong data shadows. Thirty days.”

The senators wrote it down. But writing something down is not the same as passing a law.

Part Six: The Mathematics of Theft – How Much Is Your Brain Worth?

Let me give you some numbers. Not to scare you, but because numbers are honest in a way that words sometimes aren’t.

$2.7 billion. That’s the estimated value of the neural data market by 2027. That’s according to a report from the NeuroRights Foundation, a nonprofit that tracks brain-data privacy. $2.7 billion is more than the GDP of some small countries. It’s a lot of money, and it’s coming from your head.

$199. That’s the average price of a sleep or focus band. You pay once. But the company that makes the band can sell your data over and over again, to dozens of buyers, for years. They make more money from your data than you paid for the band. In some cases, they make ten times more.

$0.50. That’s approximately what a data broker will pay for one night of your brain waves. It doesn’t sound like much. But remember, they’re buying from millions of people. And once they have your data, they can combine it with other data to create profiles worth much more.

$47. That’s the average price an insurance company will pay for a complete neuro-risk profile on a single individual. If that profile leads to a premium increase of $500 per year, the insurance company makes back their investment in about five weeks.

85 percent. That’s the accuracy with which sleep band data can predict a major depressive episode. The study that produced this number was published in a peer-reviewed journal. The researchers used data from less than two weeks of recordings.

92 percent. That’s the accuracy with which researchers can re-identify a person from their brain waves. They did this with just five minutes of recording. They used a database of fifty people. In the real world, with millions of people, matching on brain waves alone gets harder. But data brokers never use brain waves alone. Once they add location, shopping, and browsing data, the match gets easy again.

30 days. That’s the proposed deletion window. If every brain-band company was required to delete raw neural data after thirty days, the entire data-selling industry would collapse. No long-term profiles. No permanent risk scores. No lifelong tracking. Just thirty days of helpful sleep tracking, and then the data disappears.

0. That’s the number of federal laws in the United States that specifically protect neural data as medical information. Zero. The law treats your brain waves the same way it treats your step count. That is a problem.
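One of those numbers is easy to check yourself: the five-week payback on a $47 profile. A quick sketch, assuming the $500 premium increase is collected evenly across fifty-two weeks:

```python
PROFILE_COST = 47.0                 # dollars paid for one neuro-risk profile
PREMIUM_INCREASE_PER_YEAR = 500.0   # extra dollars charged to that person per year
WEEKS_PER_YEAR = 52

extra_per_week = PREMIUM_INCREASE_PER_YEAR / WEEKS_PER_YEAR
payback_weeks = PROFILE_COST / extra_per_week
first_year_return = PREMIUM_INCREASE_PER_YEAR / PROFILE_COST

print(f"Payback in {payback_weeks:.1f} weeks")
print(f"First-year return: {first_year_return:.1f}x the purchase price")
```

That comes out just under five weeks, and every week after that is profit.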

Part Seven: The Simple Fix – Encryption and Deletion

You’d think the solution would be complex. More laws. International treaties. AI ethics boards with fancy names and expensive consultants.

And yes, we need those things. But there’s a technical fix that works right now. It’s cheap. It’s proven. It’s used by secure messaging apps every single day. And the data-selling industry hates it because it would destroy their business model.

The fix has two parts.

Part One: Encrypt all neural data by default on the device itself.

Right now, most sleep bands send your raw brain signals to the cloud first, then encrypt them later. That’s like writing a secret on a postcard, putting the postcard in a clear envelope, and then handing it to a stranger who promises to put it in a locked box when they get to the other side. The stranger could read your secret at any time. They could copy it. They could sell it.

Encryption at the edge means the band encrypts your brain waves before they ever leave the sensors. The encryption key – the mathematical password needed to unlock the data – lives only on your phone. Not on the company’s servers. Not in the cloud. Just on your device.

When the band uploads encrypted data to the cloud, the company sees only gibberish. Random characters that mean nothing. They cannot analyze it. They cannot sell it. They cannot combine it with other data. It is useless to them.

The only way to decrypt the data is with the key on your phone. That means the only person who can see your brain waves is you – unless you choose to share the key with someone else.

This is not new technology. This is how the messaging app Signal works. This is how ProtonMail works. This is how encrypted backup services work. The only reason brain bands don’t do this is because it would break their ability to sell your data.
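To make the idea concrete, here is a deliberately tiny sketch of "the key never leaves your phone." It uses a one-time pad (a random key as long as the data, used once) only because that fits in a few lines with no extra libraries. That choice is an assumption of this example, not how any real app works: a real product would use a vetted library and an authenticated cipher such as AES-GCM. The Phone and Cloud classes are invented, and the phone stands in for the band-plus-phone pair.

```python
import secrets


class Phone:
    """Holds the keys. Nothing stored in this class is ever uploaded."""

    def __init__(self) -> None:
        self._keys = {}  # night label -> secret key for that night

    def encrypt(self, night: str, plaintext: bytes) -> bytes:
        key = secrets.token_bytes(len(plaintext))  # fresh random key, used once
        self._keys[night] = key
        return bytes(p ^ k for p, k in zip(plaintext, key))

    def decrypt(self, night: str, ciphertext: bytes) -> bytes:
        key = self._keys[night]
        return bytes(c ^ k for c, k in zip(ciphertext, key))


class Cloud:
    """The company's server. It only ever receives ciphertext."""

    def __init__(self) -> None:
        self.blobs = {}

    def upload(self, night: str, ciphertext: bytes) -> None:
        self.blobs[night] = ciphertext


phone, cloud = Phone(), Cloud()
reading = b"deep_sleep_min=124;rem_min=107;awakenings=3"
cloud.upload("night-1", phone.encrypt("night-1", reading))

print(cloud.blobs["night-1"])                            # what the company sees
print(phone.decrypt("night-1", cloud.blobs["night-1"]))  # what you see
```

The server's copy is random-looking bytes. Only the phone, which kept the key, can turn it back into a sleep report.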

Part Two: Automatically delete the decrypted data after thirty days.

Encryption alone is not enough. Even if the data is encrypted in the cloud, you still need to decrypt it to see your sleep scores. And when you decrypt it, the data becomes readable on your phone.

Thirty days is enough time for legitimate uses. You can track your sleep for a month. You can see if a new bedtime routine is working. You can share your data with a doctor. You can export it for personal records.

After thirty days, the app should automatically delete the decrypted data from your phone, along with the key that unlocks those nights. Not ask you. Not remind you. Just delete it. The encrypted data in the cloud can stay – but without the key on your phone, it’s useless. And if you lose your phone or get a new one, the old data becomes permanently unrecoverable.

Thirty days is also short enough to limit long-term profiling. A month of data can still reveal a lot, but it cannot follow you for years. Insurance companies cannot build a permanent risk score from thirty days of data. Employers cannot track how your focus changes over a career. Advertisers cannot keep refining a neural vulnerability profile when the data behind it keeps disappearing.

Thirty days is a compromise. It gives you the benefits of brain tracking. It takes away the risks of permanent data harvesting.
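The deletion rule is simple enough to sketch in a few lines. This is one possible shape for it, not any app's actual code. The record layout and the name purge_expired are assumptions, and the sketch treats a record that is exactly thirty days old as expired.

```python
from datetime import datetime, timedelta, timezone

RETENTION = timedelta(days=30)


def purge_expired(records: list, now: datetime) -> list:
    """Keep only records younger than the retention window. No prompt, no reminder."""
    cutoff = now - RETENTION
    return [record for record in records if record["recorded_at"] > cutoff]


now = datetime(2025, 3, 31, tzinfo=timezone.utc)
on_phone = [
    {"night": "2025-03-30", "recorded_at": datetime(2025, 3, 30, tzinfo=timezone.utc)},
    {"night": "2025-03-01", "recorded_at": datetime(2025, 3, 1, tzinfo=timezone.utc)},   # exactly 30 days old
    {"night": "2025-02-15", "recorded_at": datetime(2025, 2, 15, tzinfo=timezone.utc)},  # 44 days old
]

on_phone = purge_expired(on_phone, now)
print([record["night"] for record in on_phone])
```

An app would run something like this every time it opens, or once a day in the background.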

Why thirty and not sixty or ninety?

Because most clinical uses of brain data don’t need more than thirty days. If you’re trying to improve your sleep, you’ll see results within a week or two. If you’re tracking a medication’s effects, thirty days is plenty. If you’re monitoring a progressive neurological disease like Parkinson’s or Alzheimer’s, you might need longer – but in those cases, you should give explicit, renewed consent every thirty days. Not a one-time click in a terms of service.

The thirty-day rule is not perfect. But perfection is the enemy of progress. Thirty days is a line in the sand. It says: your brain waves are not permanent property. They are temporary signals. They belong to the moment, not the database.

Part Eight: The Loopholes – How Companies Might Try to Cheat

If a law like the Neural Data Privacy Act ever passes – and that’s a big “if” – companies will look for ways around it. They always do. Let me show you the most likely loopholes so you can spot them.

Loophole One: “Aggregated Data” Loophole

The law might say companies cannot sell individual neural data. But what if they sell aggregated data – averages of thousands of people? Is that allowed?

Here’s the problem. Aggregated data can be “de-aggregated” if the groups are small enough. If a company sells the average brain wave pattern of all NeuroBand users in a small town, and there are only fifty users in that town, you can start to guess individual patterns. With enough statistical tricks, you can reverse-engineer the original data.

The fix: Any aggregated data must come from groups of at least ten thousand people, and the company must certify that no individual can be identified. And even then, the raw data used to create the aggregates must be deleted after thirty days.
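The size rule in that fix could look something like this in practice. It is a sketch with invented names. A size threshold on its own does not guarantee anonymity, which is why the fix also asks for certification and deletion of the raw data.

```python
from statistics import mean
from typing import List, Optional

MIN_GROUP_SIZE = 10_000  # the minimum group size proposed above


def publishable_average(scores: List[float]) -> Optional[float]:
    """Return the group average only when the group is large enough to release."""
    if len(scores) < MIN_GROUP_SIZE:
        return None  # too few people: refuse to publish anything
    return mean(scores)


small_town = [70] * 50        # fifty users, as in the example above
whole_region = [70] * 10_000

print(publishable_average(small_town))    # None
print(publishable_average(whole_region))  # 70
```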

Loophole Two: “Consent” Loophole

The law might say companies need your consent to keep data longer than thirty days. So what’s to stop them from putting a pop-up in the app that says, “Click here to allow us to keep your data for research”? And what’s to stop them from making that pop-up appear every time you open the app until you finally click “yes” just to make it go away?

This is called “consent fatigue.” It’s the same trick that cookie pop-ups use on websites. They annoy you until you give in.

The fix: Consent must be “opt-in,” meaning the default is no. The company cannot ask more than once per month, and the request cannot be designed to pressure or annoy you. Violations should carry large fines.
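In software terms, that rule is small. The sketch below shows one way an app might enforce it, assuming “once per month” means a thirty-day cooling-off period. The `ConsentState` class and its method names are hypothetical, written only to illustrate the idea.

```python
from datetime import datetime, timedelta

# Assumption: "once per month" is modelled as a 30-day cooling-off period.
MIN_INTERVAL = timedelta(days=30)


class ConsentState:
    """Tracks one user's consent to extended retention. The default is no."""

    def __init__(self):
        self.granted = False     # opt-in: nothing is kept longer until the user says yes
        self.last_asked = None   # when the app last showed the request

    def may_prompt(self, now):
        """True only if the user has not consented and was not asked in the last 30 days."""
        if self.granted:
            return False
        if self.last_asked is None:
            return True
        return now - self.last_asked >= MIN_INTERVAL

    def record_prompt(self, now, user_said_yes):
        """Log that the request was shown and store the user's answer."""
        self.last_asked = now
        self.granted = bool(user_said_yes)


state = ConsentState()
day0 = datetime(2024, 1, 1)

first = state.may_prompt(day0)                            # never asked before
state.record_prompt(day0, user_said_yes=False)
next_day = state.may_prompt(day0 + timedelta(days=1))     # too soon to ask again
next_month = state.may_prompt(day0 + timedelta(days=30))  # cooling-off period is over
print(first, next_day, next_month)
```

The point of the design is that a “no” silences the pop-up for a full month. The app cannot wear you down by asking every time you open it.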

Loophole Three: “Metadata” Loophole

The law might define “neural data” as the raw brain wave signals. But what about the metadata? When you wore the band. How long you wore it. How many times you opened the app. Your average sleep score. All of these are derived from neural data, but they are not the raw signals themselves.

A clever company could say, “We deleted the raw signals after thirty days, but we kept the summary statistics forever.” Those summary statistics might be enough to build a risk profile anyway.

The fix: The thirty-day deletion rule must apply to any data derived from neural signals. Raw signals. Summary statistics. Sleep scores. Focus scores. Everything. If it came from your brain, it goes away after thirty days.
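Here is a rough Python sketch of what “everything goes away” could look like inside a company’s database. It assumes each stored item remembers when the underlying brain signal was recorded. The `Record` type and `purge_expired` function are hypothetical names used for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

RETENTION = timedelta(days=30)


@dataclass
class Record:
    kind: str               # e.g. "raw_signal", "sleep_score", "focus_score"
    collected_at: datetime  # when the underlying brain signal was recorded
    value: object


def purge_expired(records, now, retention=RETENTION):
    """Keep only records whose source signal is younger than the retention window.

    The record kind is deliberately ignored: summaries and scores expire
    on the same schedule as the raw signal they were computed from.
    """
    return [r for r in records if now - r.collected_at < retention]


now = datetime(2024, 3, 1)
store = [
    Record("raw_signal", now - timedelta(days=45), [0.1, 0.2]),
    Record("sleep_score", now - timedelta(days=45), 73),
    Record("raw_signal", now - timedelta(days=2), [0.3, 0.4]),
    Record("focus_score", now - timedelta(days=2), 81),
]
kept = purge_expired(store, now)
print([r.kind for r in kept])
```

Notice that the forty-five-day-old sleep score is removed along with the forty-five-day-old raw signal. A rule that checked only for raw signals would leave the score behind, which is exactly the loophole.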

Loophole Four: “Third Party” Loophole

The law might apply only to the company that made the band. But that company could sell the data to a third party before the thirty days are up. The third party is not covered by the law. They can keep the data forever.

The fix: The deletion requirement applies to anyone who possesses neural data, no matter how they got it. If a data broker buys your brain waves on day twenty-nine, they must delete them on day thirty.

Loophole Five: “Research Exemption” Loophole

The law might create an exemption for scientific research. That’s reasonable – research can save lives. But companies will try to stretch the definition of research. “We’re researching how to sell more ads.” “We’re researching how to deny more insurance claims.” “We’re researching how to manipulate tired people.”

The fix: Research exemptions must require approval from an independent ethics board. The board must include patient advocates and privacy experts. The research must be published in a peer-reviewed journal within a reasonable time. And participants must give explicit, informed consent – not a hidden checkbox.

These loopholes are not hypothetical. They have been used in other privacy laws. The California Consumer Privacy Act has a research exemption that some companies have exploited. The European Union’s GDPR has a consent loophole that adtech companies use every day. We need to learn from those mistakes before writing the neural data law.

Part Nine: Emma’s Fight – From Angry Customer to Activist

Remember Emma from the beginning? She didn’t stay silent after that insurance email. She got angry. And then she got organized.

The first thing she did was request her data from NeuroBand. Under California’s privacy law – the CCPA – she had the right to ask for a copy of all the data the company had about her. She filled out an online form. She waited thirty days. A file arrived in her email.

The file was enormous. Over twelve thousand rows in a spreadsheet. Each row represented one data point from her brain. There were columns she didn’t understand: “theta_power_4hz,” “delta_amplitude,” “alpha_asymmetry.” There were columns that made her skin crawl: “anxiety_probability,” “fatigue_index,” “attention_drift_rate.”

She found a graduate student in neuroscience at the local university who agreed to help her understand the file. The student’s name was Miguel. He spent three evenings going through the spreadsheet with Emma.

“This is not anonymized,” Miguel said. “Not even close. I can tell which days you had coffee and which days you didn’t. I can tell which nights you drank alcohol. I can tell when you had an argument with your husband. It’s all here.”

Miguel showed her the “third party” column. Every time NeuroBand sold her data, they logged the buyer’s name. Emma’s data had been sold seven times in six months. The buyers included:

- VeriNeuro – a data broker she had never heard of

- Actuarial Insights Group – an insurance analytics firm

- MindMatch – a job recruiting platform

- Haven Legal – a divorce law firm

- AdNeuro – the advertising company that targets tired brains

- NeuroPredict – a healthcare risk scoring company

- SleepCorp – a competitor sleep band company

Emma was shocked by the divorce law firm. She called Haven Legal the next day. A receptionist answered.

“Why did you buy my brain data?” Emma asked.

“I’m not authorized to discuss that,” the receptionist said.

“I’m a customer. You have my data. I want it deleted.”

“Please hold.”

She was on hold for eighteen minutes. Then a lawyer came on the line. He explained that Haven Legal bought neural stress data to identify potential clients. People with high stress patterns, he said, were more likely to need divorce services.

“But I’m not getting a divorce,” Emma said.

“The algorithm doesn’t know that,” the lawyer replied. “It just sees patterns.”

Emma hung up. She sat at her kitchen table for a long time. Then she opened a new document and started typing.

“My name is Emma Chen. I bought a sleep band to help with my insomnia. I did not buy a lifetime membership to a data surveillance program. But that’s what I got.”

She posted her story on a parenting forum. Within twenty-four hours, three hundred people had replied. Many of them had similar experiences. One woman’s life insurance premium had doubled after she started using a focus band. One man’s employer had asked him to “take a leave of absence” after his brain data showed signs of burnout. One teenager’s college application was flagged for “cognitive irregularities” based on a sleep band she wore for a science fair project.

Emma started a group called “My Brain Is Not a Product.” She made a website. She made a Facebook page. She made a TikTok account. The TikTok account took off. Her first video – a simple animation showing a brain with dollar signs on it – got two hundred thousand views. Her second video – a skit where she played a data broker selling someone’s dreams – got half a million.

Within three months, the group had forty thousand members. People shared screenshots of privacy policies. They built a public spreadsheet of which bands delete data and which ones sell it. They created form letters that anyone could send to their representatives.

Then Emma got a message from someone inside one of the largest brain-band companies. The person was scared. They didn’t want to be named. But they had access to internal emails. Hundreds of them.

The emails showed that executives knew their “de-identification” was easily reversible. They knew that researchers had warned them. They knew that customers would be upset if they found out. They just didn’t care.

One email from the CEO to the head of product read: “The privacy people are being paranoid. Nobody reads terms of service. Nobody cares about data. Just keep selling bands.”

Emma sat on the emails for two weeks. She didn’t know what to do. She wasn’t a journalist. She wasn’t a lawyer. She was just a tired mom who bought a headband.

She reached out to Mateo Flores – the journalist who had broken the NeuroHarvest story. Mateo verified the emails. He published the story on a Tuesday morning.

By Tuesday afternoon, the company’s stock had dropped fifteen percent. By Wednesday, the FTC had opened an inquiry. By Friday, two senators had announced a draft bill: the Neural Data Privacy Act.

Emma was invited to testify at a hearing. She sat in the same chair where Dr. Anjali had sat six months earlier. She looked at the senators. She took a deep breath.

“I’m not an expert,” she said. “I’m not a lawyer. I’m not a neuroscientist. I’m a mom who couldn’t sleep. And I accidentally sold my brain to strangers. That should not be possible. That should not be legal. Fix it.”

The senators applauded. Emma cried.

The Neural Data Privacy Act is not law yet. It might never be. But it’s the first real attempt to protect brain data in the United States. The core of the bill is exactly what Anjali recommended: encryption by default, deletion after thirty days.

Emma still wears her NeuroBand sometimes. But now she knows where the data goes. And she’s fighting to make sure everyone else knows too.

Part Ten: The Global Picture – What Other Countries Are Doing

The United States is not the only country wrestling with brain-data privacy. Around the world, different governments are taking different approaches. Some are ahead. Some are far behind.

Chile – The World Leader

Chile is the first country in the world to amend its constitution to protect brain data. In 2021, Chile’s Congress approved a constitutional amendment requiring that scientific and technological development respect people’s physical and mental integrity, and that the law give special protection to brain activity and the information derived from it.

Chile is also considering a “mental privacy” law that would require all brain-band companies to delete data after sixty days – twice as long as the thirty-day rule, but still much shorter than forever.

The Chilean example is important because it shows that change is possible. If a country with a small tech industry can pass a neurorights law, richer countries have no excuse.

European Union – The GDPR Framework

The European Union already has the strongest general privacy law in the world: the General Data Protection Regulation, or GDPR. Under the GDPR, brain data might be considered “special category data” – the same as health data or biometric data. Special category data requires explicit consent and cannot be used for automated decision-making without human review.

But the GDPR was adopted in 2016 and took effect in 2018, before brain bands were common. It does not mention neural data specifically. Some EU regulators argue that the existing law is enough. Others say a new “NeuroGDPR” is needed.

The EU is also considering a ban on “neuro-discrimination” – using brain data to make decisions about employment, insurance, or credit. That ban would be similar to existing bans on genetic discrimination.

United Kingdom – The Wake-Up Call

The UK is in the middle of a national debate about brain data after a government report revealed that the National Health Service had been sharing anonymized patient data – including some brain data – with private companies without telling patients.

The report led to the creation of a “Neuroethics Advisory Board.” The board has recommended a thirty-day deletion rule for all non-clinical neural data. It has also recommended criminal penalties for companies that re-identify anonymized brain data.

China – The Surveillance State

China has the world’s largest market for brain bands. Millions of Chinese citizens wear sleep and focus bands, some of them required by employers or schools. The Chinese government has access to much of this data.

In 2021, China passed a new data privacy law, the Personal Information Protection Law, which says companies cannot collect “sensitive personal information” without consent. But the government exempted itself from the law. Police, military, and intelligence agencies can access brain data without a warrant.

Chinese companies are also developing “brain-computer interfaces” for workplace monitoring. One factory in Shenzhen requires workers to wear focus bands during shifts. If the band detects low attention, the worker is sent home without pay for the day.

Brazil – The Late Bloomer

Brazil passed a comprehensive privacy law in 2018 called the LGPD. Like the GDPR, it protects health data. But the Brazilian government has not clarified whether neural data counts as health data.

A bill currently in the Brazilian Congress would explicitly add neural data to the list of protected information. The bill also includes a ninety-day deletion rule – longer than thirty, but still an improvement over forever.

India – The Wild West

India has no specific laws protecting brain data. The country’s privacy law, passed in 2023, covers “personal data” but does not mention neural information. Indian brain-band companies are among the most aggressive data sellers in the world, in part because there are no penalties for misuse.

A group of Indian neuroscientists has proposed a “NeuroPrivacy Framework” that would establish a thirty-day deletion rule and ban the sale of neural data to insurance companies. But the framework has not been adopted by the government.

What This Means for You

If you live in Chile, you have strong protections. If you live in the European Union, you have decent protections, though they are not brain-specific. If you live in the United States, you have almost no protections unless you live in California, which has a privacy law that is weaker than the GDPR. If you live in Brazil, you have a general privacy law, but no one has clarified whether it covers neural data. If you live in India, you are largely on your own.

But here’s the important thing: brain data does not respect borders. A company in India can sell your data to a buyer in the United States. A band made in China can ship to Europe. The laws of your country might not apply to companies based elsewhere.

That’s why a global standard is needed. The thirty-day deletion rule is simple enough to work anywhere. Encryption at the edge is a technical solution that doesn’t depend on laws. The technology exists. What’s missing is the will to use it.

Part Eleven: What You Can Do Tonight – The Action Guide

You don’t have to throw your band in the trash. You don’t have to live without sleep tracking. But you do need to take action – tonight, not tomorrow – to protect your brain waves.

Here is a step-by-step action guide. Do as many of these as you can.

Action One: Check Your Band’s Privacy Settings Right Now

Open the app for your sleep or focus band. Go to the settings menu. Look for “Privacy,” “Data Sharing,” or “Research.” Turn off anything that says “share anonymized data,” “contribute to research,” or “allow third-party access.”

Even if the setting says “anonymized,” turn it off. Remember: anonymized can be re-identified.

If you cannot find the privacy settings, search the company’s website for “how to opt out of data sharing.” If you still cannot find it, email the company. Use the template below.

Action Two: Delete Your Historical Data

Most brain-band apps have a button that says “Delete my data” or “Clear history.” Find it. Click it. Delete everything older than thirty days.

If the app does not have a delete button, email the company and demand one. Under California law and the GDPR, you have the right to have your data deleted. If the company refuses, file a complaint with your state attorney general or your country’s privacy regulator.

Action Three: Request a Copy of Your Data

Under many privacy laws, you have the right to request a copy of all the data a company has about you. This is called a “data subject access request.” It is usually free.

Send the request by email. Keep a copy. The deadline for a response depends on the law: the GDPR generally allows one month, and California law allows 45 days. When you receive the data, look for three things:

- Third-party recipients – Who has bought or received your data?

- Retention period – How long does the company keep your raw brain signals?

- Risk scores – Has the company calculated any predictions about your health or behavior?

If you find anything concerning – like a risk score for anxiety or depression – document it. Take screenshots. Save the emails. You may need them later.
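If the export arrives as a spreadsheet, like Emma’s did, you do not have to read twelve thousand rows by eye. The Python sketch below scans a CSV file for columns that look like inferred scores and lists every named recipient. It rests on assumptions: the keyword list in `SCORE_HINTS` is a guess, the recipient column is assumed to be called `third_party`, and the sample rows are invented. A real export will use its own column names.

```python
import csv
import io

# Assumption: substrings that suggest an inferred score rather than a raw signal.
SCORE_HINTS = ("probability", "index", "score", "risk", "rate")
# Assumption: the export names its recipient column "third_party".
RECIPIENT_COLUMN = "third_party"


def review_export(csv_file):
    """Return (score_columns, recipients) found in a data export."""
    reader = csv.DictReader(csv_file)
    columns = reader.fieldnames or []
    score_columns = [c for c in columns if any(h in c.lower() for h in SCORE_HINTS)]
    recipients = set()
    for row in reader:
        name = (row.get(RECIPIENT_COLUMN) or "").strip()
        if name:
            recipients.add(name)
    return score_columns, sorted(recipients)


# A tiny made-up export; a real file would be opened with open(path, newline="").
sample = io.StringIO(
    "timestamp,delta_amplitude,anxiety_probability,fatigue_index,third_party\n"
    "2024-01-01T03:00,41.2,0.31,0.77,\n"
    "2024-01-02T03:00,39.8,0.35,0.80,VeriNeuro\n"
    "2024-01-03T03:00,40.1,0.29,0.74,AdNeuro\n"
)
scores, buyers = review_export(sample)
print(scores)
print(buyers)
```

Keyword matching like this is crude. It will miss scores with unusual names and may flag harmless columns, so treat the result as a list of things to ask the company about, not as proof.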

Action Four: Send This Email to Your Band’s Company

Copy and paste this message. Fill in the blanks. Send it to the company’s privacy email address (usually privacy@companyname.com).

Subject: Question about neural data retention and sharing

To: Privacy Team

From: [Your Name]

Customer since: [Month, Year]

Device model: [Band name and model]

I am a customer of your product. I have the following questions, and I expect written answers within thirty days.

- Does my neural data ever leave your servers? If yes, to whom is it sold, and with whom is it shared?

- How long do you keep my raw brain signals? Do you delete them after a certain period?

- Do you create any “risk scores,” “predictions,” or “inferences” about my mental or neurological health?

- If I request deletion of all my data today, how long will it take?

- Do you use encryption at the edge? If not, why not?

I will share your answers publicly. Thank you for your transparency.

Action Five: Protect Your Phone

Your phone is the gateway to your brain data. It runs the band’s app and, if the band encrypts your data, usually holds the encryption keys. If your phone is compromised, your brain data is compromised. Do these three things tonight:

- Update your phone’s operating system – Security patches fix known vulnerabilities.

- Use a strong passcode – Six digits minimum. Do not use your birth year or 123456.

- Turn on two-factor authentication for your email and your band’s app account.

Action Six: Talk to Your Doctor

If you have any concerns about how your brain data might be used against you in healthcare or insurance, talk to your doctor. Ask them to document in your medical record that you have not been diagnosed with any condition that might appear in your brain data.

This sounds paranoid. It’s not. Insurance companies have used sleep band data to deny coverage for conditions that were never diagnosed by a doctor. A note in your medical record can help you fight back.

Action Seven: Join a Group

Emma’s group, “My Brain Is Not a Product,” is real. So are similar groups in other countries. Find one. Join it. You don’t have to be an activist. You just have to pay attention.

When these groups get large enough, companies notice. Politicians notice. Laws change.

Action Eight: Contact Your Representative

Find your national or state representative’s email address. Send them this message:

Subject: Please support the Neural Data Privacy Act (or similar legislation)

Dear Representative [Last Name],

I am your constituent. I live at [your address]. I am writing to ask you to support laws that protect neural data from being sold without consent.

Specifically, I ask you to support:

- Encryption of neural data on the device before transmission

- Automatic deletion of raw neural data after 30 days

- A ban on using neural data for employment, insurance, or credit decisions

Please let me know where you stand on this issue. Thank you for your service.

Action Nine: Spread the Word

Share this article with one person tonight. Just one. Send it to a friend who wears a sleep band. Post it in a group chat. Print it out and leave it at a coffee shop.

Most people have no idea their brain data is being sold. You can change that. One conversation at a time.

Action Ten: Make a Personal Pledge

Decide right now what your personal boundary is. Will you continue wearing your band? Will you delete data every thirty days manually? Will you switch to a band that promises not to sell data?

Write down your pledge. Put it on your refrigerator. Tell your family. Accountability helps.

Here is a sample pledge:

I, [your name], understand that my brain waves are personal and private. I will not sell them. I will not share them without thinking carefully. I will delete my neural data every thirty days. My brain belongs to me.

Part Twelve: The Future – Two Worlds, One Choice

Imagine it’s 2028, four years from now. Two possible worlds exist. You will live in one of them. The choice is being made right now, by people like you, in decisions that seem small.

World A – The Harvest World

In World A, brain bands are everywhere. Children wear them in school to track attention. Workers wear them in offices to track productivity. Elderly people wear them in nursing homes to track cognitive decline.

The data never disappears. Every brain wave you have ever produced – from your first sleep band at age twenty-five to your last breath at age ninety – is stored in a permanent database. Insurance companies have access. Employers have access. Advertisers have access. The government has access.

You cannot get a job without submitting six months of brain data. You cannot get health insurance without a neural risk score. You cannot get a mortgage without proving your cognitive stability. Your brain is an asset – but not yours. It belongs to the database.

When you are tired, ads for energy drinks appear on your phone, your computer, your refrigerator screen. When you are sad, you get targeted for expensive therapy apps you didn’t ask for. When you are stressed, your employer is notified before you are.

People in World A accept this because they don’t remember anything different. The bands came out when they were young. The data selling was always there. They think it’s normal. They think it’s inevitable.

It is not inevitable.

World B – The 30-Day World

In World B, the Neural Data Privacy Act passed. Or something like it passed in your country. The rules are simple: encrypt at the edge, delete after thirty days.

You can wear a band if you want. It helps you sleep better. It helps you focus. It helps you understand your brain. Every thirty days, the data vanishes like morning fog. Companies cannot sell it because it doesn’t exist long enough to sell. They cannot build permanent profiles because there is no permanent data.

Companies that try to sell neural patterns go bankrupt because there’s no market. The honest companies – the ones that sell headbands and services, not data – thrive. They compete on quality, not on surveillance.

Emma’s group becomes a nonprofit. They teach a class in every middle school called “Your Brain Belongs to You.” Kids learn that brain waves are like fingerprints – unique, personal, not for sale.

Anjali’s research is used to help people with real medical conditions. Seizure prediction. Sleep disorder diagnosis. Brain injury recovery. The data is used for healing, not for harvesting.

Carlos gets a job – not at the company that rejected him, but at a better one. He wears his focus band during work. He knows his data will disappear in thirty days. He feels safe.

Delia – the retired nurse – still wears her simple sleep band. Her daughter bought it for her. She doesn’t worry about fraud anymore because her brain data cannot be stolen. There’s nothing to steal.

Which World Will You Live In?

World A is the default. It’s where we are heading right now, at full speed, unless we change direction.

World B requires action. It requires people to speak up. It requires companies to change their business models. It requires laws to be written and enforced.

The good news is that World B is not a fantasy. The technology exists. The laws are being drafted. The activists are organizing. Emma is real. Anjali is real. Carlos is real. They are not characters in a story. They are people who decided to fight back.

You can join them.

Part Thirteen: The Last Raw Signal – A Story You Will Remember

One more story, and then I’ll let you go.

A few months ago, I met a seventy-one-year-old retired nurse named Delia O’Connor. She had no smartphone. No social media. No email address. She lived in a small apartment with a cat named Muffin and a television that only got four channels.

But Delia used a sleep band. Her daughter bought it for her – a simple one, not fancy, just a soft strap with a single sensor. Delia had mild insomnia. The band helped her see patterns. She learned that she slept better when she didn’t watch the news before bed. Small win.

One morning, Delia got a call from her bank’s fraud department. The bank was called First Federal. The caller said, “Mrs. O’Connor, someone tried to open a credit card in your name last night.”

“That’s impossible,” Delia said. “I haven’t applied for anything.”

“We know,” the caller said. “The application was flagged because the security answers didn’t match your file. The person trying to open the card knew your name, your address, and your social security number. But they didn’t know your behavioral biometrics.”

“My what?”

“Your sleep patterns. The security questions were: what time do you usually wake up? How many times do you get up at night? Do you snore? The answers were wrong.”

Delia was confused. “How would anyone know my sleep patterns?”

The caller was quiet for a moment. Then she said, “Mrs. O’Connor, do you use a sleep tracking device?”

Delia looked at the gray band on her nightstand. “Yes,” she said. “My daughter bought it for me.”

“That data was sold to a broker. The broker was hacked. The hacker sold your sleep patterns on the dark web. That’s how the fraudster got the answers.”

Delia hung up. She sat in her armchair with Muffin on her lap. She looked out the window at the oak tree in her yard. She had lived in that apartment for thirty-two years. She had raised two children there. She had buried her husband from there. And now, in her seventies, she had learned that her brain – the one thing that had always been hers, the one thing no one could take – had been sold to strangers.

She didn’t understand encryption. She didn’t understand cloud servers. She didn’t understand data brokers. But she understood one thing, and she said it to me with a voice that was tired but not broken.

“I thought I was buying help sleeping. Turns out I was selling my soul by the megabyte.”

Delia threw the band in the trash that afternoon. She called her daughter and told her never to buy anything that touches her head again.

Delia is not an activist. She’s not a lawyer. She’s not a senator. She’s a retired nurse who just wanted to sleep.

But she is the reason we need to act. Because the next Delia could be your mother. The next Emma could be your sister. The next Carlos could be you.

The fix is not complicated. It’s not expensive. It’s not waiting on some distant technology.

Encrypt all neural data at the source. Delete it after thirty days.

That’s the line in the sand. Draw it.

Afterword: The Words That Matter

If you take nothing else from this article, remember these terms. They are not for a search engine. They are for your own protection.

Neural data harvesting – The practice of collecting and selling brain wave information without meaningful consent. This is happening right now, to millions of people.

Brain fingerprinting – Using EEG patterns to uniquely identify a person. Researchers have reported that brain wave patterns can single out individuals with high accuracy, which is why “anonymized” brain data is so hard to keep anonymous.

Cognitive liberty – The proposed human right to control your own brain data. Some countries are already adding this to their constitutions.

Theta wave exploitation – Targeting advertisements or decisions based on drowsy, less-defensive mental states. This is the advertising loophole that no one is talking about.

Thirty-day neural deletion – The proposed standard for automatic erasure of raw brain signals. This is the single most effective protection against data harvesting.

Edge encryption – Encrypting brain data on the wearable device before it ever touches the cloud. This is the technical fix that breaks the data-selling business model.

NeuroRights Foundation – A leading advocacy group pushing for five basic neurorights, including mental privacy and protection from algorithmic bias.

My Brain Is Not a Product – The grassroots campaign that started with Emma Chen. You can find them online. You can join them.

Final Words

You have read a long article. You have learned about Emma, Carlos, Anjali, and Delia. You have seen how brain bands work, how data is sold, and how a simple fix could change everything.

Now you have a choice.

You can close this tab and forget you ever read it. The bands will keep selling. The data will keep flowing. The insurance companies will keep raising rates. The advertisers will keep targeting tired brains.

Or you can act.

Check your privacy settings. Delete your historical data. Email the company. Join a group. Contact your representative. Share this article with one person.

Thirty days. That’s all it takes to break the cycle. Thirty days of encryption and deletion, and the whole data-harvesting industry collapses.

Your brain belongs to you. Not to NeuroBand. Not to ZennMind. Not to AuraTrack. Not to any data broker in Virginia or Singapore or Mumbai.

Your brain belongs to you.

Act like it.